AI Governance Framework: From Adoption to Effective Practice

88% of financial services firms using AI have no formal AI risk management framework. EU AI Act high-risk obligations bind from August 2026. The window to act is narrowing fast.

Source: ACA Group / NSCP, 2024 AI Benchmarking Survey.

Aegis Compass | AI Governance™

AI Governance Is Lagging Behind AI Adoption

Seventy-five per cent of UK financial services firms now use AI. Regulators in the EU, UK, US, Singapore, and beyond have responded. Binding obligations, sector-specific expectations, and live enforcement are now in play. So the question is no longer whether regulations apply to AI. It is whether your firm can show it governs AI effectively, not just in policy but in practice.

Aegis Compass | AI Governance™ is the Argus Pro assessment framework for AI governance and regulatory compliance. It is built on ISO/IEC 42001:2023. It aligns with the EU AI Act, the FCA Mills Review of AI in financial services, the US Treasury FS AI RMF, MAS FEAT Principles, the Colorado AI Act, and equivalent obligations across jurisdictions. Compliance leaders get a structured view of where they stand. They also get a clear picture of what it will take to close the gap.

75% of UK financial services firms now use AI in some form

From Principles to Obligations: The AI Regulatory Shift

For years, AI governance in financial services relied on voluntary frameworks and principles-based guidance. That era is ending. Regulators are now enforcing binding obligations across the jurisdictions where our clients operate.

European Union: The AI Act (Regulation (EU) 2024/1689) entered into force on 1 August 2024. Prohibitions on unacceptable-risk practices applied from February 2025. Rules for general-purpose AI models applied from August 2025. High-risk obligations bind from August 2026. Credit scoring, fraud detection, and automated customer decisioning sit in the high-risk category. They require mandatory risk assessments, data governance, human oversight, and conformity assessments before deployment.

United Kingdom: The FCA has stated it will not introduce AI-specific rules in the near term. Firms must instead apply existing frameworks. These include Consumer Duty, the Senior Managers and Certification Regime, and model governance obligations. The diligence expected is the same as for any other regulated activity. The Mills Review of AI in financial services is expected to report in 2026.

Singapore, Hong Kong, and Japan: MAS FEAT Principles and the Veritas Toolkit set substantive expectations for AI in banking and insurance. The HKMA has issued principles for the responsible use of AI in banking. Japan's AI Promotion Act came into force in June 2025.

United States: Federal regulation remains fragmented. State-level legislation in Colorado, California, and New York is shaping a complex landscape. Sector-specific guidance from the SEC, FDIC, and OCC, together with the US Treasury FS AI RMF, adds further obligations.

International: Frameworks and risk assessments from the Financial Stability Board, IOSCO, and the OECD are influencing supervisory expectations. This is true even where binding rules are not yet in force.

Compliance Gaps That Regulators Are Already Examining

Governance and explainability are central to the EU AI Act, FCA Consumer Duty, and MAS FEAT Principles. Regulators are now asking specific questions about AI-driven decision-making. They want to know how bias is tested and mitigated. They also want clarity on how senior managers oversee complex AI systems.

These concerns are not theoretical. The FCA's January 2025 research uncovered systematic risks in credit scoring models used across the industry. The EU AI Act classifies credit scoring and fraud detection as high-risk. Firms with existing AI deployments in these areas may already be carrying compliance gaps. With obligations binding in 2026, the time for action is now.

A Structured View of Where Your AI Governance Stands, and What It Will Take to Close the Gap

Aegis Compass | AI Governance™ targets compliance leaders and boards overseeing AI governance in regulated firms. It focuses on governance rather than auditing technical aspects of AI systems. By providing a structured and cross-jurisdictional view, it helps assess how well a firm's AI practices align with compliance obligations.

The framework is based on ISO/IEC 42001:2023, the global standard for AI management systems. It is then adjusted for the binding regulatory obligations of each jurisdiction, so the assessment stays relevant wherever a firm operates.

Aegis Compass | AI Governance™ covers the full span of AI governance obligations. It addresses board-level accountability, AI inventory and risk classification, and policy and standards alignment. It covers data governance for AI, model validation and testing, and deployment and change management. Human oversight, AI-specific cybersecurity, and transparency obligations sit alongside fundamental rights, anti-discrimination, and contestation. Foundation models, generative AI, and agentic AI are addressed as discrete obligation classes. Vendor risk, AI literacy, and continuous improvement complete the picture.

Unlike ISO/IEC 42001:2023 certification, which confirms that an AI management system is in place, Aegis Compass | AI Governance™ measures whether that system is working effectively in practice. It assesses both maturity and effectiveness against a common baseline, enabling comparison and benchmarking.

Built for the Accountable, Not the Model Builders.

The Argus Pro Ecosystem

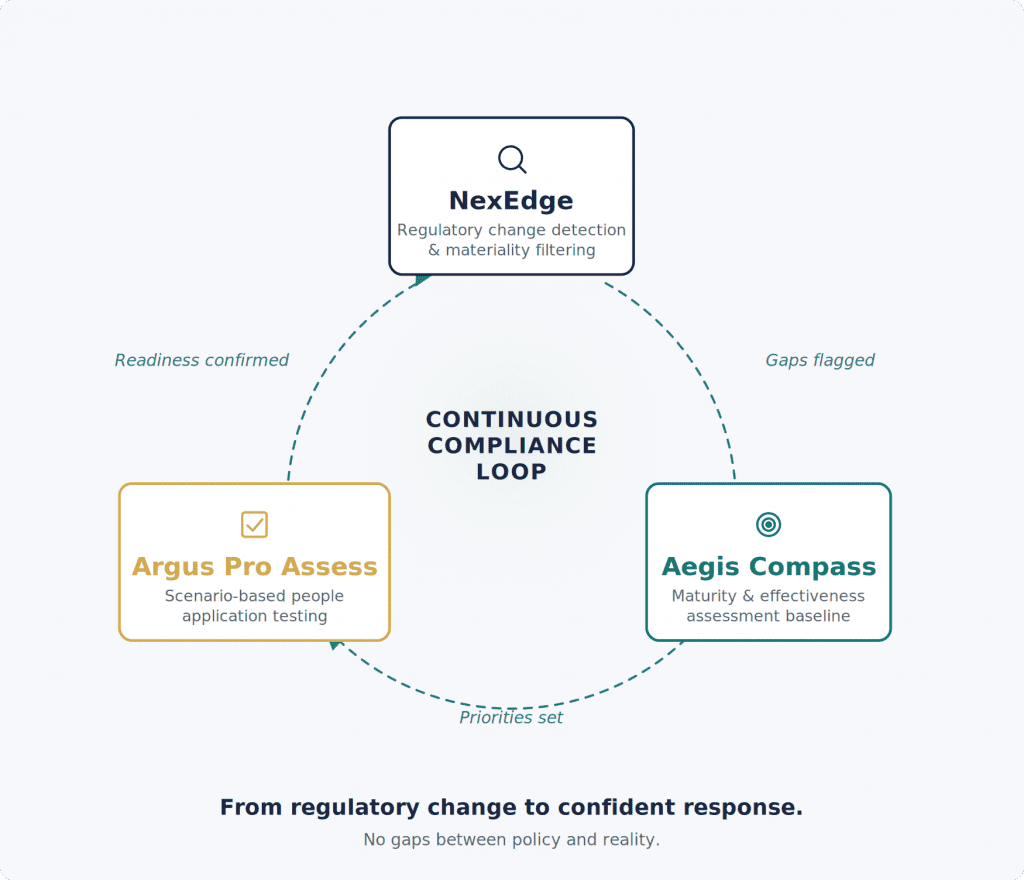

Argus Pro's platforms form a connected ecosystem for continuous compliance management:

- Aegis Compass | AFC™ – Anti-Financial Crime compliance assessment

- Aegis Compass | CDOR™ – Cybersecurity and Digital Operational Resilience assessment

- Aegis Compass | AI Governance™ – AI governance and regulatory compliance assessment

- Aegis Compass | ESG™ – ESG compliance at the intersection of financial crime and operational resilience

- NexEdge™ – Regulatory change management platform

- Argus Pro Assess, powered by Traverse™ – Scenario-based capability assessment

Learn More about Aegis Compass | AI Governance™

48% of firms have formal AI governance committees. Only 28% test or validate AI outputs. Just 24% have policies covering third-party AI use.

Progress is visible. The structural governance gaps are not. Don't get caught out. Act now.

Source: ACA Group / National Society of Compliance Professionals (NSCP), 2025 AI Benchmarking Survey, via Business Wire.

Related

Aegis Compass | AFC™

Measure the effectiveness of your Anti-Financial Crime programme across 30 jurisdictions.

Aegis Compass | CDOR™

Assess your Cybersecurity and Digital Operational Resilience position across 30 jurisdictions, including DORA, NIS 2, and the UK CBEST framework.

NexEdge™

Regulatory intelligence that tracks, checks, and alerts, so that your compliance position keeps pace with a regulatory landscape that does not stand still.

Disclaimer: Argus Pro is not an auditor and does not provide audit opinions; our frameworks are not audits. Our frameworks support readiness, prioritisation and improvement planning.